The AI capex supercycle: The $700 billion bet, and what it means for your portfolio

Every time Microsoft or Google announces another quarter of record spending, markets react, sometimes rewarding the company, sometimes punishing it. Yet most investors have no clear framework for what is actually happening or why it matters to them. The biggest capital deployment in the history of private technology is reshaping which companies win, which sectors get rewired, and how exposed your portfolio is to a cycle that is either the infrastructure buildout of the century or the most expensive arms race in corporate history.

That’s why we built Winvesta Crisps, to break down what’s actually moving markets, in plain language, before the consensus catches up. 60,000+ investors from all over India are already in. What about you?

🔔 Don’t miss out!

Add winvestacrisps@substack.com to your email list so our updates never land in spam.

Four companies. One earnings season. A combined capital expenditure on track to exceed $600 billion, and potentially approach $700 billion, in 2026, up roughly 60 to 80% from the year before, based on sell-side compilations after Q1 results. That is Microsoft, Alphabet, Amazon, and Meta collectively committing to spend more in a single year than the whole US energy sector spends, by most analysts’ estimates, on drilling, extracting, refining, and delivering energy to the American economy.

This is the AI capex supercycle. And if you own a US equity index fund, a handful of large-cap tech names, or virtually any broad ETF with meaningful technology exposure, you are already in it, whether you know it or not. The question is whether you understand what you are actually holding.

The bull case is that we are watching the infrastructure layer of the next twenty years of the global economy being assembled in real time, comparable to the railroads of the 1880s or the broadband boom of the 1990s, but moving faster and, in nominal dollars, at a scale neither of those precedents approached. The bear case is that we have seen this pattern before, and the builders of the infrastructure did not always turn out to be the winners.

This edition of Winvesta Crisps breaks down the mechanics, the historical context, who actually profits, the risks that most retail investors are not pricing correctly, and what it means in practice for Indian investors holding US equities.

🏗️ How we got here: The infrastructure arms race that snuck up on everyone

To understand why 2026’s spending numbers feel almost surreal, it helps to see how quickly the ramp happened.

As recently as 2022, the combined capital expenditure of five major US hyperscalers (Alphabet, Amazon, Meta, Microsoft, and Oracle) totalled around $162 billion, according to Epoch AI’s analysis of SEC filings. By 2025, that figure had nearly tripled to roughly $448 billion, and by late 2025 those same companies were spending over $130 billion in a single quarter. The inflexion point was mid-2023, when generative AI shifted from an experimental feature to an existential competitive priority and the spending curve went nearly vertical.
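To see what "nearly tripled" implies year by year, here is a short Python sketch that turns the two cited figures into a growth multiple and a compound annual growth rate (CAGR). It assumes a clean three-year span from 2022 to 2025 and uses only the numbers quoted above; the function names are illustrative, not drawn from any source.

```python
# A minimal sketch: how fast did combined hyperscaler capex grow?
# Inputs are the two figures cited above, in billions of US dollars.
# Assumption: a clean three-year span from 2022 to 2025.

def growth_multiple(start: float, end: float) -> float:
    """How many times larger the end value is than the start value."""
    return end / start


def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate implied by a start and end value."""
    return (end / start) ** (1 / years) - 1


capex_2022 = 162.0  # $bn, combined capex of the five hyperscalers in 2022
capex_2025 = 448.0  # $bn, the same five companies in 2025

multiple = growth_multiple(capex_2022, capex_2025)
rate = cagr(capex_2022, capex_2025, years=3)

print(f"Growth multiple: {multiple:.2f}x")     # about 2.77x
print(f"Implied annual growth: {rate:.1%}")    # about 40% a year
```

Roughly 40% compound growth a year, sustained for three years, is what "the spending curve went nearly vertical" looks like in numbers.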

The catalyst was ChatGPT’s commercial explosion and the ensuing recognition, felt viscerally in every hyperscaler boardroom, that compute infrastructure would be the moat that determined which companies controlled the next generation of software, cloud, and advertising. The fear of falling behind outweighed the cost of building ahead. That asymmetric logic is what drives arms races, and this one has the balance sheets to sustain it.

By the Q1 2026 earnings season, the latest round of guidance showed just how far that logic has run. Sell-side estimates put combined 2026 capex for the major hyperscalers at $600 to $700 billion, with meaningful upside risk, and multiple analysts now expect combined spending to approach or cross $1 trillion by 2027. Goldman Sachs projects cumulative AI capex across compute, data centres, and power of several trillion dollars between now and the early 2030s, though the figure varies significantly depending on chip replacement cycles and power assumptions.

To put those numbers in human terms: by some estimates, Amazon’s 2026 capex commitment alone rivals the annual investment budget of the entire US energy sector. This is no longer a technology story. It is an economic infrastructure story.

⚙️ What is all this money actually buying?

When hyperscalers say they are spending hundreds of billions on AI infrastructure, the capital flows into a surprisingly specific set of physical assets. Understanding the stack matters because it tells you where the money actually lands, and who captures it.

Compute: GPUs and custom silicon

The core unit of AI infrastructure is the accelerator, a processor purpose-built for the parallel computations that AI training and inference demand. NVIDIA currently captures roughly 90% of AI accelerator spending, per industry estimates, making it the single most direct beneficiary of every hyperscaler capex dollar. Its GB300 platform is the backbone of most major AI clusters being assembled right now, with the upcoming Vera Rubin platform next in line.

But all four hyperscalers are simultaneously investing in custom silicon (Google’s TPU, Amazon’s Trainium, Microsoft’s Maia, and Meta’s MTIA), partly to reduce dependence on NVIDIA and partly to improve unit economics for specific workloads. Amazon’s custom silicon business has crossed a $10 billion annual run rate. The custom chip story is real, but it is also years away from threatening NVIDIA’s overall position, because AI workload demand is growing faster than custom chip capacity can scale.

Memory: The bottleneck nobody talks about

High-bandwidth memory, or HBM, has quietly become one of the most critical constraints in the entire AI buildout. It sits beside the GPU on the same package and feeds it the data it processes; more HBM capacity and bandwidth mean faster, more capable AI systems. Industry analysts expect the HBM market to grow by multiples in 2026 as AI infrastructure demand accelerates, with SK Hynix controlling the majority of supply and prices having spiked sharply over the past year. Microsoft’s CFO cited rising memory costs as a significant driver of the company’s 2026 capex increase, one of the clearest public signals that component inflation is now a material factor in hyperscaler spending plans.

Data centres and power: The physical constraint that will define the decade

Every GPU cluster needs a building, a cooling system, and enormous amounts of electricity. Global data centre electricity consumption is projected to roughly double between 2022 and the end of the decade, per IEA estimates, driven primarily by AI workloads. Hyperscalers are signing long-dated power purchase agreements (PPAs) with nuclear and renewable energy providers at a pace that would have been unimaginable three years ago. Meta signed a large nuclear PPA with Vistra, and Google has made significant direct investments in power generation capacity. The physical grid is now the binding constraint: not chip supply, not software, not demand. New data centre projects face multi-year delays due to shortages of transformers, switchgear, and grid capacity.

The energy angle is not a sideshow. It is where the capex cycle is now most constrained, and where the next set of investment winners is forming.

The dynamics covered in this article affect every US stock in your portfolio. Trade from India on the Winvesta app. No US bank account needed!

🚀 Join 60,000+ investors, become a paying subscriber or download the Winvesta app and fund your account to get insights like this for free!

🏆 Winners, losers, and the picks-and-shovels map

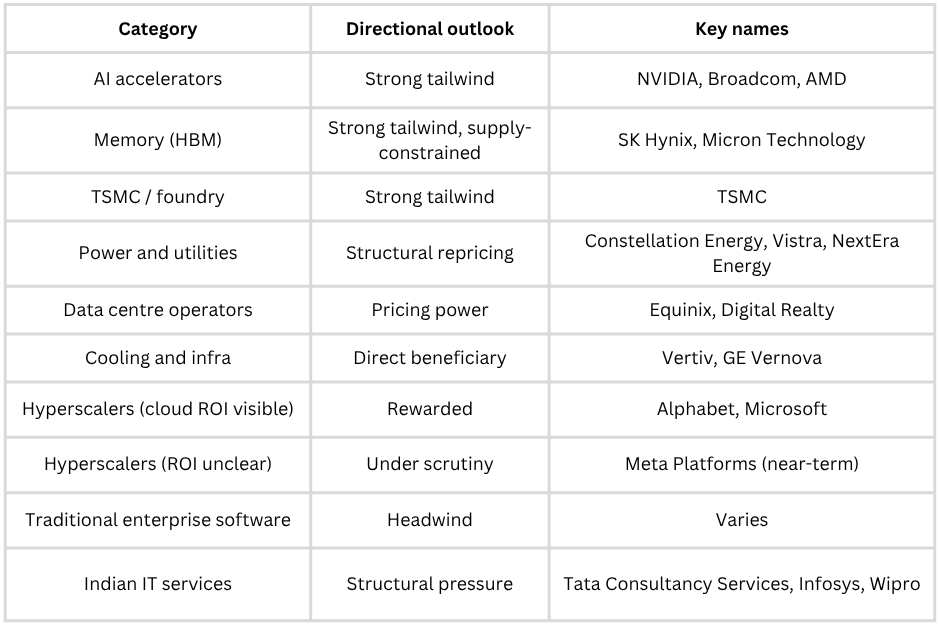

Not all stocks benefit equally from a $700 billion capital spending cycle. The sector map is more differentiated than most headline coverage suggests.

Clear structural winners

Semiconductors capture the largest share of each capex dollar. NVIDIA is the most direct beneficiary, but Broadcom (custom chip design and networking), TSMC (which manufactures chips for NVIDIA, AMD, Apple, and practically everyone else), and AMD all sit in the direct line of hyperscaler spending. TSMC’s advanced-node order pipeline is deepening as hyperscaler capex surges, and it matters little which chip architecture ultimately wins, because TSMC fabricates nearly all of them.

Memory is the current bottleneck trade. SK Hynix and Micron are the primary beneficiaries of HBM demand, with prices elevated and supply committed well into 2027. Memory-intensive compute workloads, particularly AI inference at scale, show no sign of reducing their appetite.

Power and utilities have been quietly repriced. Data centre electricity consumption is projected to roughly double by 2030, reaching just under 3% of global electricity use, driven largely by AI workloads. Utility companies like Constellation Energy and NextEra are no longer just defensive yield plays; they are high-demand infrastructure providers in an AI-driven power cycle. GE Vernova, which supplies gas turbines and grid solutions, has rallied strongly over the past year as data centre power demand reshapes the utility investment thesis.

Data centre operators like Equinix and Digital Realty benefit from falling vacancy rates and pricing power on renewals. In core markets, vacancy has dropped below 3%.

Cooling and physical-infrastructure specialists, particularly those focused on high-density data centre cooling systems, have seen revenues and valuations surge in response to hyperscaler demand.

Companies under pressure

Traditional enterprise software companies that have not successfully integrated generative AI into their products are seeing customers reallocate budgets toward compute-heavy AI solutions. Software businesses relying on legacy perpetual-licence or maintenance models face an accelerating risk of displacement.

Indian IT services (discussed in detail below) face a structural double threat: US clients are tightening discretionary technology budgets, while AI coding agents and automation tools are beginning to compress the labour-cost arbitrage that underpins the traditional outsourcing model.

The nuanced middle: The hyperscalers themselves

The hyperscalers are simultaneously the largest spenders and the largest beneficiaries, but investor sentiment is diverging based on which ones are converting capex into visible revenue growth. The Q1 2026 earnings season illustrated this clearly. Alphabet’s Google Cloud reported strong double-digit revenue growth, with its enterprise backlog expanding sharply, a concrete positive demand signal.

Microsoft’s AI-related business reported very strong year-on-year growth, with analysts estimating its AI revenue contribution at an annualised run rate in the mid-tens of billions of dollars. Those results were rewarded. Meta’s stock dropped despite 33% revenue growth, punished for raising its capex guidance without a clear near-term monetisation timeline for its AI infrastructure. The market is differentiating: show visible ROI and get rewarded; spend without a visible return and face the penalty box.

Illustrative directional framework. Not investment advice. Actual outcomes depend on individual company execution.

🇮🇳 The India angle: A two-sided story

For Indian investors, the AI capex supercycle is not just a story about US tech stocks. It reaches Indian domestic markets in direct, consequential ways.

The threat to Indian IT: Structural, not cyclical

The Nifty IT index fell approximately 19% in the first months of 2026, and Indian IT companies lost close to $50 billion in market value in the first two quarters of the year, per market data. This is not a routine cyclical de-rating or a macro mood swing. It reflects a genuine and growing investor concern that the traditional Indian IT business model, with large teams of offshore engineers delivering software services at a labour-cost advantage, faces a structural threat from AI automation.

Agentic AI tools are beginning to compress marginal costs for tasks that Indian IT firms have historically been paid to do: code reviews, software testing, legacy system maintenance, and documentation. The risk is not that Indian IT disappears overnight; large enterprise transformation deals still require human delivery. But the growth trajectory of the next decade looks very different from that of the last three decades.

The companies best positioned within Indian IT are those pivoting toward AI implementation and enterprise transformation services rather than volume-based delivery. The companies most exposed are those with high concentrations of the work that AI tools are replacing fastest.

The opportunity: Indian talent and infrastructure are in demand

The same AI infrastructure buildout that pressures Indian IT’s legacy model is also creating real demand for Indian engineering talent. Hyperscalers and their supply chain partners are expanding hiring in India for AI infrastructure roles, data engineering, and cloud platform work. India’s pool of engineering graduates gives it a structural advantage in capturing a share of this demand, but only if the workforce and the companies pivot toward higher-value AI work.

India is also becoming a meaningful destination for data centre investment. The AI buildout’s power and land requirements are driving hyperscalers to look beyond the US, Japan, and Europe for capacity. India’s rapidly expanding digital infrastructure, improving power grid reliability in key technology corridors, and large domestic cloud demand make it an increasingly viable location for regional AI infrastructure.

What this means for your US portfolio

If you own a broad US equity index fund, you are already meaningfully exposed to the AI capex supercycle. Companies directly tied to AI, including the major hyperscalers, NVIDIA, Broadcom, and adjacent names, now represent roughly a quarter to nearly a third of broad US equity indices, a weight that has grown dramatically over the past decade. A passive US equity allocation is not diversified away from AI; it is a concentrated AI bet.

The dollar dimension matters too. A period of sustained tech-driven earnings strength in the US can support the dollar, which boosts your rupee-denominated returns when you eventually convert back. If the AI cycle produces a correction, whether from capex fatigue, disappointing ROI, or a market de-rating, dollar weakness could follow, compressing your returns in rupee terms on top of the fall in US share prices. Currency exposure is not separable from the AI capex story; it amplifies the swing in both directions.
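The "amplifies the swing in both directions" point comes down to simple compounding: your rupee return is the dollar return on the holding multiplied by the change in the USD/INR exchange rate. The Python sketch below shows that arithmetic; the scenario returns and currency moves are hypothetical numbers chosen only for illustration, not forecasts.

```python
# A minimal sketch of how currency moves compound with US stock returns
# for an investor who measures results in rupees. All scenario numbers
# below are hypothetical, chosen only to illustrate the mechanics.

def rupee_return(usd_return: float, usdinr_change: float) -> float:
    """Return measured in INR.

    usd_return:    the holding's return in US dollars (0.20 = +20%)
    usdinr_change: change in the USD/INR rate over the same period
                   (positive = dollar strengthened against the rupee)
    """
    return (1 + usd_return) * (1 + usdinr_change) - 1


scenarios = {
    # name: (hypothetical USD return, hypothetical USD/INR change)
    "AI cycle keeps running, dollar firms": (0.20, 0.04),
    "AI correction, dollar weakens": (-0.20, -0.04),
}

for name, (usd_ret, fx) in scenarios.items():
    inr_ret = rupee_return(usd_ret, fx)
    print(f"{name}: USD {usd_ret:+.1%}, INR {inr_ret:+.1%}")
```

In both hypothetical scenarios the rupee result is larger in magnitude than the dollar result (+24.8% versus +20%, and -23.2% versus -20%), because the stock move and the currency move point the same way.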

🧭 What to watch: The signals that actually matter

The AI capex cycle produces a steady stream of headlines. Most of them are noise. Here is what actually moves the needle.

Cloud revenue growth at the three major platforms

AWS, Azure, and Google Cloud are the main channels through which all this infrastructure spending is turned into revenue. If these three are growing revenue faster than capex, the bull case is intact. If revenue growth decelerates while capex continues to rise, the market will start pricing in ROI risk. Watch quarterly cloud growth rates closely; they are the leading signal for whether the spending is converting.
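The first rule in that paragraph, revenue growing faster than capex, can be written as a one-line comparison of year-on-year growth rates. The Python sketch below is a deliberate simplification: the function names are hypothetical, it ignores the deceleration test in the second rule, and the quarterly figures in the example are placeholders, not any company’s reported results.

```python
# A simplified screen for the "revenue growing faster than capex" rule.
# Function names are illustrative; the example figures are hypothetical
# placeholders, not reported results for AWS, Azure, or Google Cloud.

def yoy_growth(current: float, prior: float) -> float:
    """Year-on-year growth rate (0.30 = +30%)."""
    return current / prior - 1


def spending_is_converting(
    revenue_now: float,
    revenue_year_ago: float,
    capex_now: float,
    capex_year_ago: float,
) -> bool:
    """True when cloud revenue is growing at least as fast as capex."""
    revenue_growth = yoy_growth(revenue_now, revenue_year_ago)
    capex_growth = yoy_growth(capex_now, capex_year_ago)
    return revenue_growth >= capex_growth


# Hypothetical quarter, in billions of US dollars:
# revenue +25% year on year, capex about +39% year on year.
print(spending_is_converting(revenue_now=30.0, revenue_year_ago=24.0,
                             capex_now=25.0, capex_year_ago=18.0))  # False
```

A single quarter of `False` is not a verdict; the signal that matters is the trend across several quarters, read alongside backlog and free cash flow.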

Capex guidance revisions

Hyperscalers have raised capex guidance in every recent earnings season. A downward revision, particularly from Meta, which faces the most investor scrutiny on ROI, would likely trigger a broad sector selloff. An upward revision from Alphabet or Microsoft accompanied by strong backlog data would be a confirmation signal for the bull case.

Memory prices and HBM availability

HBM supply is committed through 2026, and pricing is elevated. A meaningful loosening of supply, or evidence of demand destruction from custom silicon reducing HBM requirements per workload, would indicate the bottleneck is easing. That is deflationary for the memory trade but potentially positive for overall AI economics.

Power deal announcements

Nuclear power purchase agreements, grid interconnection approvals, and data centre permitting news have become material market events for utility and infrastructure stocks. The pace of power deal signings tells you how aggressively hyperscalers are planning their 2027 and 2028 build.

Free cash flow trajectories

This is the bear indicator to watch. Amazon’s FCF is projected to turn negative in 2026 as capex outpaces operating cash flow, and Alphabet’s FCF could compress dramatically. If FCF compression becomes severe and sustained without a corresponding acceleration in cloud revenue, the market will price in a reckoning. Watch quarterly FCF alongside cloud revenue growth; the gap between them tells you how much runway the current spending pace has.
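Free cash flow is commonly calculated in simplified form as operating cash flow minus capital expenditure, which is why heavy capex can push it below zero even while the underlying business keeps growing. The Python sketch below shows that relationship with hypothetical figures; they are not Amazon’s or Alphabet’s numbers.

```python
# A minimal sketch of free cash flow and how rising capex drives it negative.
# All figures are hypothetical; they are not any company's reported numbers.

def free_cash_flow(operating_cash_flow: float, capex: float) -> float:
    """FCF in its common simplified form: operating cash flow minus capex."""
    return operating_cash_flow - capex


ocf = 140.0  # $bn, hypothetical annual operating cash flow, held constant

for capex in (90.0, 140.0, 180.0):  # $bn, hypothetical capex levels
    fcf = free_cash_flow(ocf, capex)
    print(f"Capex {capex:.0f}bn -> FCF {fcf:+.0f}bn")
```

With operating cash flow held flat, FCF falls from +50bn to zero to -40bn as capex climbs, the pattern to watch for in quarterly filings.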

Custom silicon milestones

Amazon’s Trainium, Google’s TPU, and Meta’s MTIA are being deployed at real scale now. If custom silicon begins to displace NVIDIA GPUs in specific workload categories on performance and cost, it would affect NVIDIA’s pricing power and the overall cost of AI compute, both of which would be meaningful market events.

If this changed how you see the AI story in your portfolio, pass it on.

🏁 The bottom line

The AI capex supercycle is the most consequential capital deployment story in technology since the broadband buildout of the late 1990s, and it is happening faster, at a larger scale, and with companies that are actually profitable this time. That last point matters enormously. Unlike the dot-com era, when many infrastructure builders were unprofitable and heavily indebted, today’s hyperscalers are generating real cloud revenue, real operating cash flows, and real enterprise demand signals. Cloud backlog figures from the major hyperscalers, while not directly comparable across companies, are growing rapidly and signal genuine contracted future demand, not speculation.

But history is also unambiguous about one uncomfortable truth: the builders of transformational infrastructure do not always win. Many of the railroad companies of the 1880s built the arteries of the American economy, only to go bankrupt. The telecom companies of the 1990s built the fibre backbone of the internet, only to lose as much as 92% of their equity value. The technology endured. Many of the shareholders did not.

The honest assessment for 2026: the bull case has stronger evidence than the bear case, but the bear case has stronger historical precedent. That is not a reason to panic out of tech exposure. It is a reason to think carefully about concentration, about which parts of the AI stack you own, and about whether your exposure is positioned toward companies with visible ROI today or toward those still spending on hope.

For Indian investors in US equities, the practical framework is this. If you own broad US index funds, you are already deeply in the AI capex cycle, whether you intended to be or not. Consider whether adding explicit picks-and-shovels exposure (semiconductors, power infrastructure, and memory) gives you better positioning across the cycle than pure hyperscaler concentration. Watch the cloud revenue numbers each quarter as your leading indicator of cycle health. And think about the currency dimension: a cycle that keeps running tends to support the dollar and lift your rupee returns; a correction accompanied by dollar weakness would compress them on both fronts.

The AI capex supercycle is not a theme to trade around. It is a structural force reshaping the composition of the US equity market, the global power grid, the future of Indian IT, and the economics of virtually every company built on software. Understanding it puts you ahead of the investors reacting to earnings headlines without knowing what game is actually being played.

By the numbers

On track to exceed $600 billion, potentially approaching $700 billion, combined 2026 capex for the major hyperscalers, up roughly 60 to 80% from 2025, per sell-side estimates and analyst compilations after Q1 earnings

Several trillion dollars, the order of magnitude Goldman Sachs and other research firms project for cumulative AI infrastructure spend through the early 2030s, covering compute, data centres, and power; the precise figure is highly sensitive to chip replacement cycles and power assumptions

Mid-tens of billions of dollars, analysts’ estimate of Microsoft’s annualised AI revenue contribution as of early 2026, more than doubling year on year; the clearest near-term proof of spending converting to revenue

Rapidly expanding, cloud backlog figures across major hyperscalers, all growing meaningfully quarter on quarter; a real demand signal, though not directly comparable across companies

Significant multiples of growth, projected HBM market expansion in 2026 vs 2025; one of the most acute supply bottlenecks in the AI infrastructure stack, with SK Hynix controlling the majority of supply

Roughly a quarter to nearly a third, the estimated share of broad US equity indices now represented by AI-linked companies, a weight that has grown substantially over the past decade; a passive US index fund is a concentrated AI bet

~16 to 19% YTD, and roughly 25 to 30% off its 52-week high, the range of declines in India’s Nifty IT index in the early months of 2026, depending on the measurement date; a direct market signal of AI’s structural pressure on the traditional outsourcing model

Disclaimer: All content provided by Winvesta India Technologies Ltd. is for informational and educational purposes only and is not meant to represent trade or investment recommendations. Remember, your capital is at risk. Terms & Conditions apply.