Intel (INTC): The chip giant’s AI foundry gamble

Picking a semiconductor stock used to mean tracking PC shipments and server upgrade cycles. Not anymore. The AI infrastructure buildout has redrawn the entire chip map: fab geography, government subsidies, and foundry strategy now matter as much as chip design.

That’s why we built Winvesta Crisps, to break down what’s actually driving the companies you own, in plain language, before the consensus catches up. 60,000+ investors from all over India are already in. What about you?

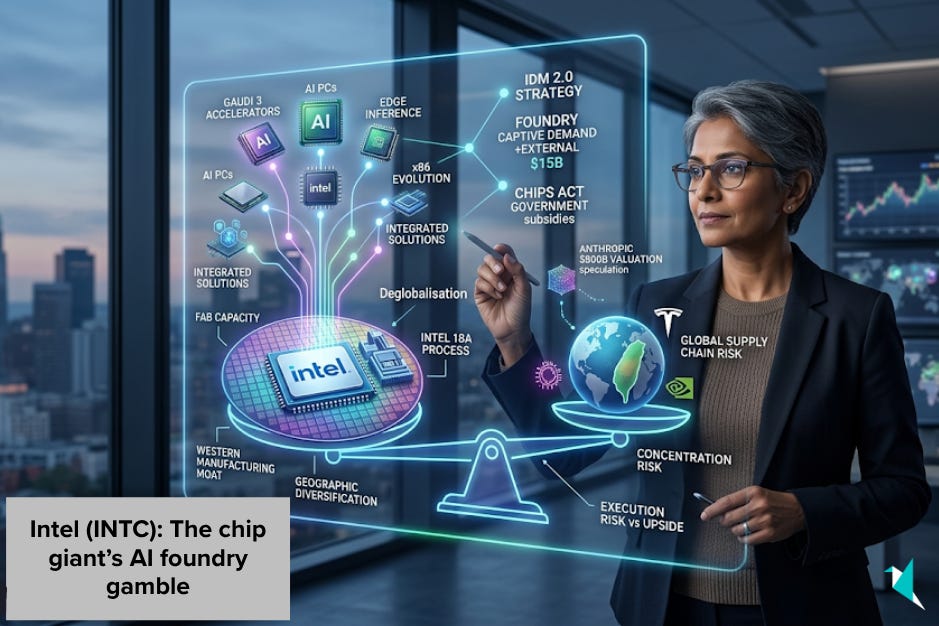

Intel isn’t just a chipmaker anymore. It’s attempting one of the most audacious corporate transformations in semiconductor history. While the world watches NVIDIA mint money from AI accelerators and TSMC dominate contract manufacturing, Intel is simultaneously trying to reclaim process technology leadership, build a world-class foundry business, and capture AI chip market share. The company that once defined Moore’s Law now finds itself racing to catch up on multiple fronts, and the stakes have never been higher.

Intel straddles a difficult paradox: it is the company most exposed to America’s semiconductor ambitions, yet it remains the one that must prove it can execute. Intel’s “IDM 2.0” strategy (designing and manufacturing its own chips while opening its fabs to outside customers) is a bet that the AI era needs a Western alternative to TSMC. If Intel’s 18A process technology underperforms, if foundry customers walk, or if AI chip share stays stubbornly in the low single digits, the entire transformation thesis collapses. This article examines Intel’s evolving business mix, the foundry strategy, the AI opportunity, and whether the execution risks are worth the potential upside.

🧩 Business model 2.0: From integrated chipmaker to foundry-plus-products

Intel’s revenues now come from four distinct engines rather than just PC processors and data centre chips, a restructuring that reflects the IDM 2.0 vision set out under former CEO Pat Gelsinger.

Client computing group

The legacy PC and laptop processor business generated approximately $32 billion in revenue in 2025, per Intel’s earnings release, down modestly year-over-year from its recent peak. The segment includes Core Ultra processors with integrated AI engines and represents Intel’s consumer-facing brand strength. Margins remain healthy, helping fund the company’s ambitious reinvestment programme.

Data centre and AI

Intel’s server chip business pulled in approximately $16.9 billion in 2025, up roughly 5% year-over-year despite intense competition from AMD’s EPYC and custom Arm-based chips from cloud providers, per Intel’s earnings release. The segment now includes Gaudi AI accelerators, Intel’s answer to NVIDIA’s data centre GPUs, which came with its acquisition of Habana Labs. Growth has been anaemic compared to rivals, but Intel is banking on Gaudi 3 and upcoming Falcon Shores chips to recapture share in AI training and inference workloads.

Intel foundry

This is the transformation’s centrepiece. Launched in 2021 and now reported as a separate business unit, Intel Foundry aims to manufacture chips for external customers, competing directly with TSMC and Samsung. Intel reported $17.8 billion in Intel Foundry revenue in 2025, though this is largely offset by $17.7 billion in intersegment eliminations, per Intel’s filings; in other words, the foundry is still predominantly serving Intel’s internal chip designs. External lifetime bookings have reached approximately $15 billion in committed deal value, including agreements with Microsoft and the U.S. Department of Defense. Intel is building fabs in Arizona, Ohio, and Germany, backed by tens of billions of dollars in prospective U.S. and European subsidies, including large CHIPS Act allocations. The strategy: leverage process technology improvements, including the Intel 18A node arriving in 2025, to attract customers who want geographic diversification away from Taiwan.
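To see how small the external piece is today, here is a back-of-the-envelope sketch in Python using only the two figures quoted above. It assumes, for simplicity, that the intersegment eliminations relate entirely to the foundry’s sales to Intel’s own product groups; the filings may not split it that cleanly, so treat the result as a rough ceiling rather than a reported number. The function name is purely illustrative.

```python
# Figures in billions of USD, as quoted in the paragraph above.
foundry_revenue = 17.8
intersegment_eliminations = 17.7


def implied_external_revenue(segment_revenue: float, eliminations: float) -> float:
    """Rough ceiling on external revenue, ASSUMING every eliminated dollar
    is an internal foundry sale to Intel's own product groups."""
    return round(segment_revenue - eliminations, 1)


external = implied_external_revenue(foundry_revenue, intersegment_eliminations)
external_share = external / foundry_revenue

print(f"Implied external foundry revenue: ~${external:.1f}B")
print(f"Share of reported foundry revenue: {external_share:.1%}")
```

On these assumptions, outside customers account for well under 1% of reported foundry revenue, which is why the $15 billion in lifetime bookings matters more to the thesis than the current run-rate.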

Mobileye and other

The autonomous vehicle technology unit and the programmable chip business (formerly Altera) add diversification, contributing roughly $3 billion in combined revenue. Mobileye went public in 2022, but Intel retained majority ownership.

In other words, “Intel” is now a foundry operator with a captive chip design business and AI ambitions—not just a PC processor company clinging to past dominance.

🌪️ AI infrastructure buildout as a tailwind: Capacity constraints meet geopolitical reshoring

The explosion in AI model development has created unprecedented demand for semiconductor manufacturing capacity and advanced chips—a dynamic that plays directly into Intel’s reinvention narrative.

Foundry capacity scarcity

TSMC’s leading-edge fabs are running near capacity, with waiting lists for 3nm and 2nm nodes stretching into 2027. Hyperscalers like Microsoft, Amazon, and Google are designing custom AI chips but need foundry partners outside Taiwan. Intel’s Arizona and Ohio fabs—once they reach volume production on Intel 18A (1.8nm-class process)—offer a Western alternative. Microsoft has already committed to using Intel Foundry, signalling that “good enough and geographically diverse” may trump “absolute bleeding edge” for some customers.

AI accelerator proliferation

NVIDIA’s H100 and H200 GPUs dominate AI training, but inference workloads are fragmenting across diverse silicon architectures. Intel claims up to 50% better price-performance for certain large language model workloads on its Gaudi 3 accelerators versus prior generations. As inference deployment scales—Anthropic’s Claude, OpenAI’s GPT models, Meta’s Llama—cost-per-inference becomes critical. Intel is betting that “good enough” AI chips at lower cost will capture share once the training boom matures into inference at scale.
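It helps to translate a price-performance claim into cost terms. The short Python sketch below assumes “price-performance” means work done per dollar (an assumption; Intel’s exact definition and benchmark setup are not given here) and converts the headline “up to 50%” figure into the implied drop in cost per unit of work. The function name is hypothetical.

```python
def cost_reduction(price_performance_gain: float) -> float:
    """Fractional drop in cost per unit of work, ASSUMING price-performance
    is measured as work done per dollar spent."""
    return 1 - 1 / (1 + price_performance_gain)


claimed_gain = 0.50  # "up to 50% better price-performance", per Intel's claim
saving = cost_reduction(claimed_gain)

print(f"Implied drop in cost per inference: about {saving:.0%}")
```

Under that reading, a 50% gain in price-performance works out to roughly a one-third cut in cost per inference, not a halving. That is a meaningful saving at scale, but it is a best-case figure on selected workloads.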

Sovereign chip strategies

Europe and the U.S. are pouring subsidies into domestic semiconductor manufacturing. Intel’s German fab project and Ohio complex align perfectly with this trend. Governments want resilient supply chains; Intel offers a politically palatable vehicle to achieve that, even if industry observers estimate Intel still trails TSMC’s leading edge by roughly a year or more—though the gap has narrowed considerably.

For a firm that earns margins on both manufacturing services and chip sales, AI-driven capacity scarcity and geopolitical reshoring mean pricing power and strategic relevance could return after years in the wilderness.

🤖 AI integration everywhere: From Gaudi accelerators to Core Ultra inference

Intel is not just benefiting from the AI wave—it is embedding AI capabilities across its entire product stack, from cloud to edge.

Gaudi data centre accelerators

Intel’s Gaudi 2 and Gaudi 3 chips target AI training and inference in hyperscale data centres. Gaudi 3, launched in 2025, delivers what Intel claims is up to 4x the training performance of Gaudi 2 on certain benchmarks, with integrated HBM providing high bandwidth for large model workloads. Intel argues that the total cost of ownership is lower than NVIDIA’s for certain inference tasks, citing memory bandwidth efficiency and lower power consumption. Early adopters include Stability AI and Hugging Face. While various estimates put NVIDIA’s share of AI training accelerators at well over two-thirds of the market, with Intel’s Gaudi in the low single digits, Intel is positioning Gaudi as the “open ecosystem” alternative, supporting PyTorch, TensorFlow, and standard AI frameworks without proprietary lock-in.

Core Ultra AI PC chips

Intel’s client processors now include integrated neural processing units (NPUs) capable of 10–34 TOPS (trillion operations per second) for on-device AI workloads. Windows 11’s Copilot features, local LLM inference, and real-time video effects run on these NPUs without cloud connectivity. As models shrink and edge inference grows, Intel’s vast global installed base of x86 PC chips becomes a distributed AI platform, a different competitive arena from data centre GPUs.

Xeon with AMX and accelerators

Intel’s latest Xeon server CPUs embed Advanced Matrix Extensions (AMX) for accelerating AI inference alongside traditional compute workloads. The Sierra Forest and Granite Rapids Xeon lines, shipping in 2024–2025, allow customers to run mixed workloads—databases, web services, and AI inference—on the same processors without discrete accelerators. For enterprises unwilling to redesign infrastructure around GPUs, this “AI everywhere” approach offers incremental adoption.

For Intel, AI is both a competitive positioning imperative and a tangible feature set woven into silicon across cloud and edge—not a single-product bet.

🕵️‍♀️ Intel Foundry Services: An under-the-radar potential unlock

One of Intel’s most strategic—and least understood—initiatives sits far away from its branded Core and Xeon chips: the foundry business itself.
